Agentic AI Beyond the Screen: From Software Agents to Physical Agents

I want to take you on a short journey. Not a journey about AI hype, or the latest model release. This is a journey about a pattern. A pattern that has repeated itself across the history of computing and technology.

Every time technology became more abstract, more connected, and easier to use, it did not simply improve old software. It created entirely new kinds of companies, new markets, and even new human behaviors. And I think we may be standing at one of those moments again.

The story starts simply. There was once a job called "computer": a person who calculated numbers and provided the answers. Then we built the computer, a machine that could compute. Then we wrote programs for it. Now the machine could follow instructions, step by step.

Then software became connected through networks and APIs. Programs could talk to other programs. Software became more modular, more composable, and more networked.

Then came automation. Software no longer just waited for a user to give it a command. It started running workflows, moving information between systems, and handling repetitive tasks semi-automatically.

Then came machine learning models. Software no longer only followed fixed rules. It could detect patterns, classify information, predict outcomes, rank options, and recommend actions.

Then came large language models. This was a much more obvious shift. Suddenly, software could interact with us through our language. It could read, write, summarize, generate, and respond in a way that felt much closer to how humans naturally communicate.

Then came new connection layers like MCP, the Model Context Protocol. Now these models could connect more cleanly to tools, systems, and live context.

And now, we are entering the age of agents. Not software that only waits. But software that receives a goal, uses tools, makes decisions within boundaries, and takes action.

At first, this sounds like a technical evolution. But I think it is much bigger than that. Because every one of these layers changed the kinds of companies that could exist.

The personal computer created software companies.

The internet created online platforms.

APIs created cloud ecosystems.

Mobile phones created app companies.

Automation created workflow companies.

Machine learning created prediction engines.

LLMs created AI-native products.

So the real question is not only: What is an agent? The real question is: What happens when intelligence stops being something we use and starts becoming something that acts on its own?

That is where the story becomes interesting. Until recently, many people looked at serious AI agents as something far away. A beautiful idea? Yes. An impressive demo? Of course. But in the real world? Maybe five years away. Maybe ten.

Then a project like OpenClaw started to change the perception. Whether OpenClaw itself becomes the final winner is not the important point. The important point is that it helped change how people feel about the category.

Agents no longer looked like just an idea. They started to look like a real product category. Something that can connect to tools, operate across interfaces, maintain memory, preserve context, and behave more like an operator than a smart chatbot.

And this is often how the future arrives. Not when the technology becomes perfect. But when perception changes. When people stop asking: “Is this even possible?” And start asking: “Okay, what job can this do next?”

That takes us to the next chapter. AI starts becoming a kind of software employee.

Not magic. Not unlimited intelligence. Not a replacement for every human. But a system that can take over specific and bounded functions: support, scheduling, lead qualification, coding, research, compliance, workflow coordination, and even financial and legal operations.

The shift here is not only from software to smarter software. It is from software as a tool to software as a worker. And once that happens, the next step becomes almost inevitable.

Intelligence starts moving closer to the point of action. Not only in the cloud. Not only in the browser. But at the edge: inside cameras, inside sensors, inside machines, inside vehicles, inside buildings.

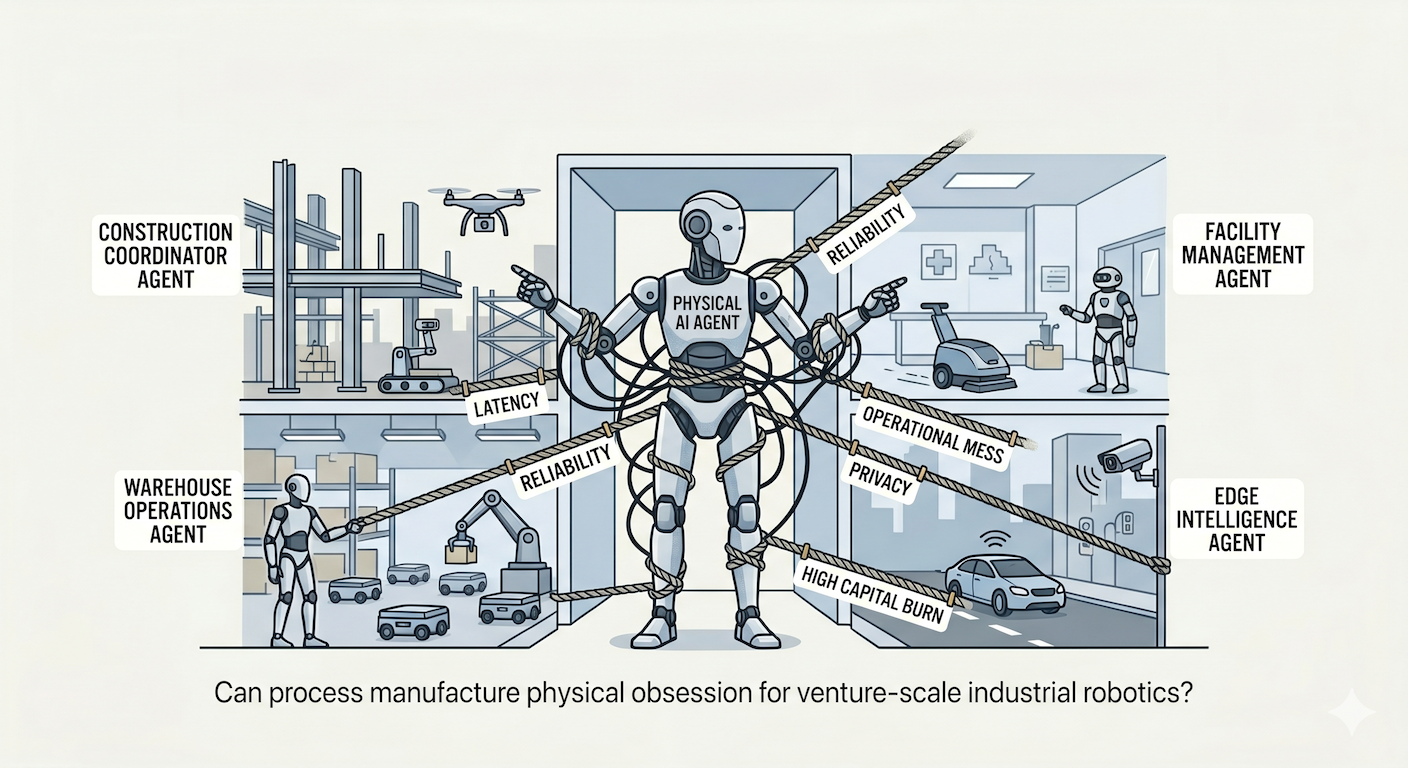

Because when AI starts making real-time decisions in the physical world, latency matters. Reliability matters. Privacy matters. Safety matters. You cannot always send every decision to a distant server and wait for the answer.

So the story evolves again: from intelligence that helps, to intelligence that operates, to intelligence that acts at the point of action. And this is where we reach the part that still feels futuristic: physical AI. From software agents to embodied agents: self-driving cars, robots, drones, autonomous inspection systems, warehouse machines, construction intelligence, security systems, facility management robotics, and more.

The important point is this: This is no longer just science fiction. Yes, embodied intelligence still feels futuristic. But serious software agents also felt futuristic until suddenly they did not.

This is why I keep coming back to the timing question. When the technical stack becomes ready, when interfaces become simpler, when tools become connected, and when demos start turning into real workflows, the future can move from “someday” to “much sooner than we thought.”

Many industries in our region were built on labor, coordination, supervision, presence, and operations. These are exactly the sectors where software intelligence alone was not enough. But agentic AI, combined with edge intelligence and physical systems, may suddenly unlock entirely new business models.

That matters. Because it means our region may not only adopt this wave. It may become one of the places where parts of this wave are built.

Think about construction. For years, construction tech often meant dashboards, ERP systems, and project management software. Useful? Yes. Transformative? Sometimes.

But now imagine AI site supervisors. Inspection drones. Computer vision safety agents. Robotic layout systems. Scheduling agents that coordinate labor, materials, equipment, and subcontractors in real time.

That is not just better software. That is a new operating model.

Think about hospitality and cleaning. What if cleaning stops being purely a labor business and becomes a business of coordination, autonomy, routing, robotics, and quality assurance?

Think about security. Not only guards and cameras. But autonomous patrol systems, perimeter intelligence, sensor fusion, and systems that do not only detect incidents but respond to them.

This is the deeper shift. Some sectors that once looked too physical, too fragmented, too local, too operational, and too messy for venture capital may suddenly become more programmable than we imagined.

And when an industry becomes programmable, it does not only become more efficient. It becomes investable in a different way. That, to me, is the real opportunity.

In the software era, startups sold tools. In the agent era, startups may start selling workers. Digital workers first. Physical workers next.

So maybe the next generation of great startups will not only be software companies. Maybe they will build AI employees, edge intelligence, robotic labor, and entirely new operating models for the physical world.

The next big VC category might not be another SaaS tool. It might be physical AI. Not AI that lives only on a screen. But AI that sees, decides, coordinates, moves, and acts. And if the last few years have taught us anything, it is this: What looks five to ten years away can arrive much sooner once the stack is ready.

So the final question is not: Does this sound futuristic? The better question is: Which industries in our region are about to become more programmable than we ever imagined?